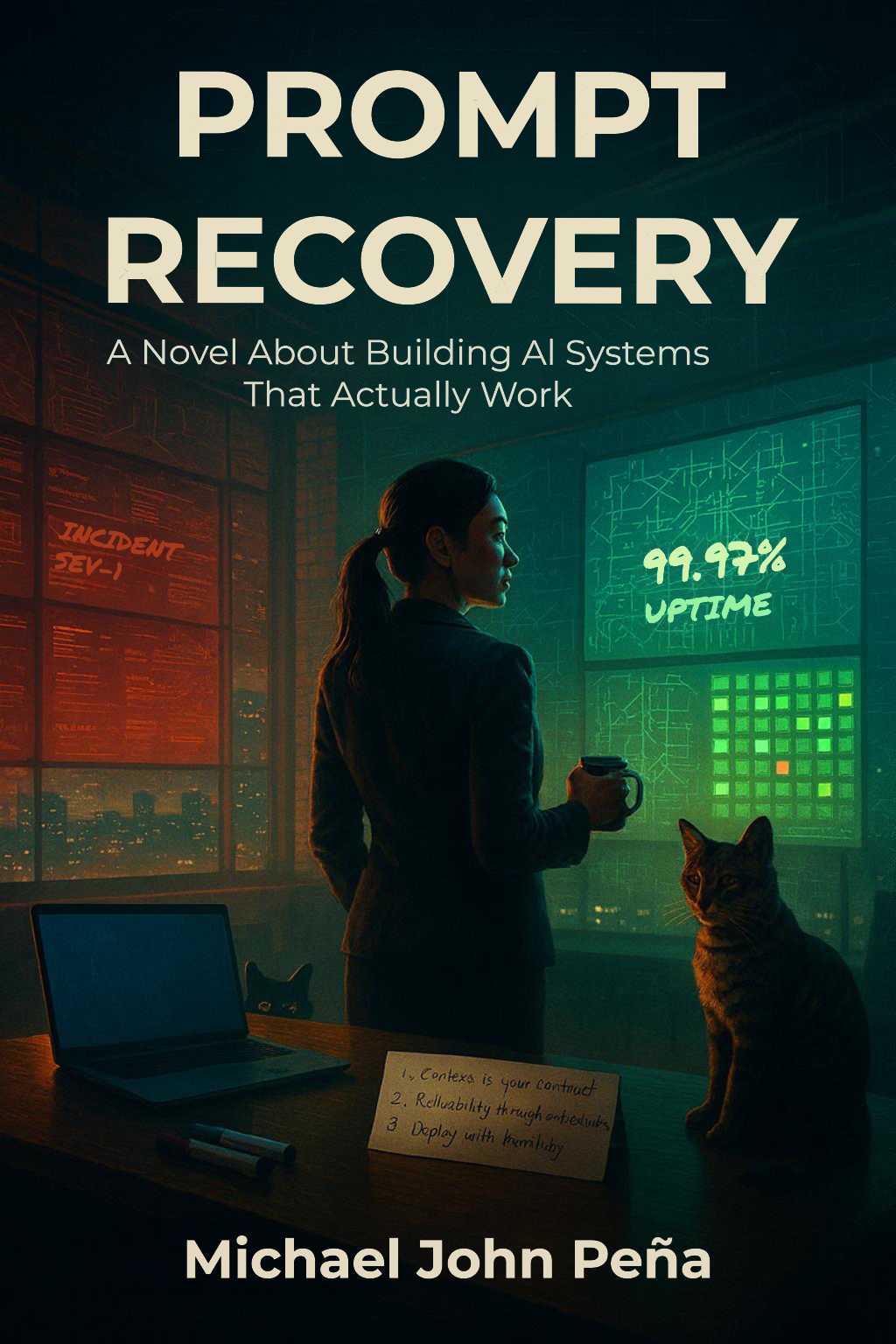

Day one.

Everything is already on fire.

Sarah Chen is a seasoned engineering leader who has just been hired to run the AI platform at AutoScale — a fast-growing startup whose crown jewel, AgentOS, is held together by one exhausted engineer, duct tape, and good intentions.

Sarah's Slack notification sounded at 11:47 PM. Then again at 11:48. By 11:50, her phone was buzzing with the intensity of a trapped bee. She knew what that meant: production was on fire, and she was about to do something reckless about it.

She untangled herself from the couch, dislodging Kernel from her lap and earning a look of betrayal that only a cat could deliver with such precision.

— Chapter 1: Into the Fire

She has 90 days before the board pulls the plug. What follows is a crash course in building AI systems that survive contact with reality — told through the lens of one team's fight to turn chaos into something they can be proud of.